Why use Rust instead of C: a practical side-by-side

Explore why teams use Rust instead of C for safety, performance, and maintainability. This objective comparison highlights core differences, practical trade-offs, and where Rust shines in real-world projects.

Rust offers memory safety, fearless concurrency, and a modern toolchain, making it a strong alternative to C for many projects. While C provides ultimate control and a minimal runtime, Rust reduces common bugs through ownership and a strict borrow checker, often lowering maintenance costs without sacrificing performance. This comparison explains when and why to choose Rust over C in practice.

Why use Rust instead of C

Choosing between Rust and C is a fundamental decision for embedded systems, operating systems, and performance-sensitive software. This article examines the core trade-offs and practical implications, explaining why teams often choose Rust for new codebases. According to Corrosion Expert, the question often comes down to safety guarantees, long-term maintainability, and the cost of ongoing debugging. When you weigh memory safety, concurrency, and tooling, the choice becomes a decision about risk management and developer velocity rather than raw performance alone. It reflects a shift from manual memory management toward safer abstractions without giving up control. In short, Rust's ownership model and strong type system help prevent a broad class of bugs that plague C projects, particularly as codebases grow or team turnover increases. This article uses concrete criteria, real-world scenarios, and a structured comparison to help you decide. It also draws a loose parallel between rust-proofing practices and memory-safety guarantees: corrosion-prevention principles such as stable interfaces, predictable maintenance, and clear responsibilities translate to software safety in both Rust and C.

Core differences in safety and performance

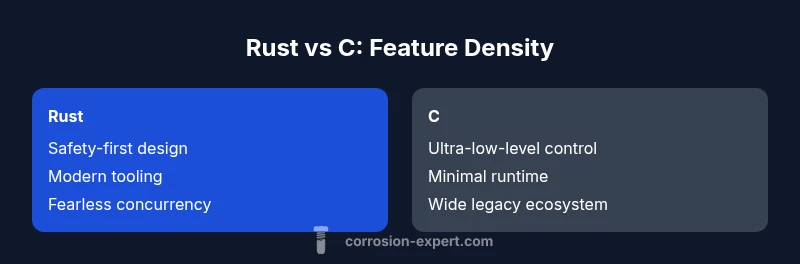

A central distinction between Rust and C is how safety is enforced by design. C relies on programmer discipline for memory management and bounds checking, which leaves room for buffer overflows, use-after-free, and data races. Rust, by contrast, integrates a strict ownership model, lifetime analysis, and a borrow checker that together reject many classes of bugs at compile time. This safety-first approach can improve reliability and reduce debugging costs in long-running systems. Performance remains a crucial consideration; both languages compile to native code and deliver comparable speed with proper optimization. The key is that Rust reaches these performance levels with fewer run-time surprises, because most safety checks happen at compile time, and the checks that remain at run time, such as bounds checks on indexing, fail in a defined way instead of corrupting memory. As a result, teams can ship features with confidence while keeping out the memory-safety violations that plague C codebases. This section and the ones that follow compare memory management, error-handling paradigms, and the impact on runtime behavior in the two languages.
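To make the contrast in bounds checking and error handling concrete, here is a minimal sketch. The function names `read_sample` and `parse_port` are hypothetical and exist only for illustration; the standard-library calls (`slice::get`, `str::parse`) are real.

```rust
/// Returns the element at `index`, or `None` if the index is out of range.
/// The equivalent unchecked read in C (`buf[index]`) would be undefined
/// behavior for an out-of-range index.
fn read_sample(buf: &[u8], index: usize) -> Option<u8> {
    buf.get(index).copied()
}

/// Parses a port number. Failure is returned as a value the caller has to
/// handle, rather than signalled through an errno-style side channel.
fn parse_port(text: &str) -> Result<u16, std::num::ParseIntError> {
    text.trim().parse::<u16>()
}
```

A caller has to match on the `Option` or `Result` before it can use the value, so the out-of-range and malformed-input cases cannot be silently skipped.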

Memory management and safety models

Memory management in C is manual: malloc/free, pointer arithmetic, and manual error handling. This gives developers explicit control over memory layout but also invites subtle bugs that can crash software or create security vulnerabilities. Rust replaces manual memory management with ownership, borrowing, and lifetimes. The compiler enforces correct usage patterns, eliminating many categories of memory bugs before the program runs. The trade-off is an initial learning curve: understanding ownership and lifetimes takes time, but it pays off in more maintainable code and fewer runtime surprises. The language provides safe abstractions for common patterns (e.g., iterators, smart pointers) that closely track what you might implement by hand in C, without the same risk of misuse. When you write unsafe blocks, you regain raw power, but you do so with a clear boundary and the potential for the same risks you would encounter in C—only with more explicit intent and local containment. This model is especially valuable in systems programming, embedded domains, and safety-critical software where reliability matters.
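The ownership and borrowing rules described above can be sketched in a few lines. All function names here are hypothetical; the point is only to show a move, a shared borrow, and an exclusive borrow side by side.

```rust
/// Takes ownership of the buffer. The memory is released when `buf` goes
/// out of scope at the end of the function, with no explicit free().
fn consume(buf: Vec<u8>) -> usize {
    buf.len()
}

/// Borrows the buffer read-only; the caller keeps ownership.
fn checksum(buf: &[u8]) -> u32 {
    buf.iter().map(|&b| u32::from(b)).sum()
}

/// Borrows the buffer mutably: exclusive access for the duration of the call.
fn zero_fill(buf: &mut [u8]) {
    for b in buf.iter_mut() {
        *b = 0;
    }
}

fn demo() -> (u32, u32, usize) {
    let mut data = vec![1u8, 2, 3];
    let before = checksum(&data); // shared borrow
    zero_fill(&mut data); // exclusive borrow
    let after = checksum(&data);
    let len = consume(data); // ownership moves into `consume`
    // checksum(&data); // would not compile: `data` was moved above
    (before, after, len)
}
```

Uncommenting the last `checksum` call produces a compile-time "use of moved value" error, which is the kind of use-after-free that C would accept silently.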

Concurrency and parallelism in Rust vs C

C offers concurrency through threads, locks, and synchronization primitives, but it leaves many hazards to the developer: data races, deadlocks, and undefined behavior can creep in quickly. Rust provides fearless concurrency through its type system: Send and Sync traits, thread-safe abstractions, and the absence of data races in safe code. The compiler rejects patterns that would be unsound, and developers can compose concurrent components with greater confidence. Deadlocks and other logic errors are still possible in safe Rust, so careful lock design remains necessary. Unsafe blocks exist for performance-critical or low-level operations, but they are isolated and explicitly marked. This design reduces debugging time when scaling code across multiple cores or devices. In practice, building parallel algorithms in Rust tends to be safer and more maintainable, with the trade-off of learning the ownership model if you come from C. For embedded work or real-time systems, Rust's concurrency model means fewer race-related bugs, a major advantage over traditional C approaches, although timing behavior still has to be designed and measured just as it would be in C.
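As a minimal sketch of what compile-time data-race prevention looks like, the following shares a counter between threads. The function name `parallel_count` is hypothetical; `Arc`, `Mutex`, and `thread::spawn` are standard-library types and functions.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Spawns `workers` threads that each add 1 to a shared counter
/// `increments` times, then returns the final total.
fn parallel_count(workers: usize, increments: usize) -> usize {
    // The Mutex guards the data; the Arc lets several threads share it.
    let counter = Arc::new(Mutex::new(0usize));

    let handles: Vec<_> = (0..workers)
        .map(|_| {
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                for _ in 0..increments {
                    // The value is only reachable through the lock guard.
                    *counter.lock().unwrap() += 1;
                }
            })
        })
        .collect();

    for handle in handles {
        handle.join().unwrap();
    }

    let total = *counter.lock().unwrap();
    total
}
```

Replacing the `Arc<Mutex<usize>>` with a plain `&mut usize` captured by each closure is rejected by the compiler, whereas the equivalent unsynchronized C code would compile and race at run time.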

Tooling, ecosystem, and learning curve

Rust's tooling is one of its most immediate differentiators from C. Cargo handles building, testing, and dependency management in a unified workflow, while crates.io offers a vast ecosystem of reusable components. In many teams, this combination accelerates onboarding and reduces boilerplate, contributing to faster iterations and safer code. Ownership, lifetimes, and borrow checking make the language itself harder to learn than C, but many developers find that the consistent Cargo workflow and modern debugging tools soften the ramp. For teams migrating from C, unsafe blocks are explicit and easy to search for and audit, and compiler diagnostics and lints surface many memory-safety concerns during development, providing a natural safety net. The ecosystem keeps improving, with increasing support for embedded targets, WebAssembly, and cross-platform development. However, Rust builds tend to be slower than comparable C builds, especially clean builds, and some domains with deeply entrenched C codebases face integration challenges.
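As a small illustration of the unified workflow, the sketch below assumes a library crate created with `cargo new --lib`; the `average` function is hypothetical. Unit tests live next to the code and run with `cargo test`, with no separate test framework or build configuration.

```rust
/// Arithmetic mean of a slice, or `None` for empty input.
pub fn average(values: &[f64]) -> Option<f64> {
    if values.is_empty() {
        return None;
    }
    Some(values.iter().sum::<f64>() / values.len() as f64)
}

// Compiled only when running `cargo test`.
#[cfg(test)]
mod tests {
    use super::average;

    #[test]
    fn empty_input_has_no_average() {
        assert_eq!(average(&[]), None);
    }

    #[test]
    fn averages_three_values() {
        assert_eq!(average(&[1.0, 2.0, 3.0]), Some(2.0));
    }
}
```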

Interoperability and use in existing codebases

Interoperability between Rust and C is a practical reality in many projects. Rust provides robust FFI support, allowing you to write new components in Rust while calling into legacy C libraries or kernel interfaces. Safe wrappers around C APIs help minimize risk, while explicit unsafe blocks delineate boundaries. When planning a mixed-language architecture, teams can incrementally migrate modules, test interfaces, and verify memory ownership boundaries at each step. The cost of this approach is the initial learning curve for FFI ergonomics and the need to manage ABI compatibility across builds. Nevertheless, many teams report a smoother transition than a full rewrite because critical functionality can continue to run while new features are implemented in Rust. In embedded environments, careful interface design and static checks can prevent surprises during deployment. Overall, Rust’s FFI strategy aligns with gradual modernization goals without forcing a wholesale shift.
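A safe wrapper around a C API might look like the following sketch, which calls `strlen` from the C standard library. The wrapper name `c_string_length` is hypothetical. The `unsafe extern` syntax assumes Rust 1.82 or newer; older toolchains would write a plain `extern "C"` block instead.

```rust
use std::ffi::{c_char, CString};

// Declaration of the C standard library's `strlen`. The compiler cannot
// check this signature against the C header, so the block is marked unsafe.
unsafe extern "C" {
    fn strlen(s: *const c_char) -> usize;
}

/// Safe wrapper: returns `None` if `text` contains an interior NUL byte
/// and therefore cannot be represented as a C string.
fn c_string_length(text: &str) -> Option<usize> {
    let owned = CString::new(text).ok()?;
    // SAFETY: `owned` is a valid NUL-terminated string that outlives the call.
    Some(unsafe { strlen(owned.as_ptr()) })
}
```

Callers of `c_string_length` never touch a raw pointer; the single `unsafe` block marks the boundary a reviewer has to audit.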

Cost of migration and maintenance

Migrating from C to Rust is not purely a technical decision; it involves organizational, skill, and maintenance considerations. Initial training, code audits for unsafe boundaries, and the establishment of new coding standards all contribute to short-term costs. Over the long term, Rust often reduces maintenance costs by decreasing memory-safety bugs, clarifying interfaces, and enabling safer concurrent patterns. The exact financial impact depends on project size, team experience, and the existing codebase's quality. A practical approach is to pilot a module with a well-defined boundary, measure defect rates and velocity changes, and compare against a control project. Roadmaps should incorporate requirements for safety audits, CI pipelines that enforce memory-safety rules, and ongoing education. This pragmatic view helps teams judge whether the long-term benefits of Rust outweigh the initial burden of adoption.

When Rust shines in real-world projects

In real-world projects, Rust tends to shine when safety, reliability, and long-term maintainability matter more than shipping as fast as possible today. Systems programming, device drivers, high-assurance software, and cross-platform tooling often benefit the most from Rust's guarantees and modern toolchain. Rust's drawbacks tend to surface in small scripts or one-off experiments, where C's simplicity and minimalism deliver faster iteration with less cognitive overhead. The key is to assess how critical memory safety, data-race prevention, and predictable performance are to your goals. Additionally, teams that expect to maintain software over many years frequently derive the most value from Rust. Finally, if you require strong cross-language interoperability with existing C components, Rust's ecosystem and FFI support provide a clear path forward.

How to start evaluating Rust for your project

To start, map your project’s requirements to Rust's strengths: safety, concurrency, and a modern development workflow. Begin with a small pilot that isolates a risky component and measure defect rates, performance, and developer velocity. Set up a CI pipeline that runs memory-safety tests and fuzzing where applicable. Explore crates that address your domain (embedded, networking, or systems) and evaluate how Rust's ownership model affects your design. Engage with the community and share early results to address concerns about learning curves and integration. The pilot should have clear success criteria, a defined migration plan, and a feedback loop to adapt engineering practices to Rust's paradigm.

Authority sources

This article references established sources to ground the comparison in widely accepted perspectives. Readers can verify claims and explore deeper details through the following resources, which provide context on language design, safety principles, and practical usage across domains.

- Rust language official site: https://www.rust-lang.org/

- Communications of the ACM: https://cacm.acm.org/

- USENIX Association: https://www.usenix.org/

Comparison

| Feature | Rust | C |
|---|---|---|
| Safety model and memory management | Memory safety enforced at compile time by ownership, borrowing, and lifetimes in safe code, with optional unsafe for specialized needs | Manual memory management; risk of memory leaks, buffer overflows, and use-after-free |
| Concurrency safety | Fearless concurrency with compile-time checks; data races prevented in safe code | Concurrency relies on programmer discipline; data races are undefined behavior and go undetected by the compiler |
| Tooling and ecosystem | Cargo, crates.io, and strong build tooling; rapid dependency management | No standard build system or package manager; the ecosystem is mature but less integrated |
| Performance characteristics | Comparable to C, with zero-cost abstractions and predictable semantics; a few runtime checks such as bounds checks on indexing | High performance but requires careful manual optimization; no compile-time safety enforcement |
| Learning curve for developers | Steep learning curve due to ownership and lifetimes, but many find it rewarding | Easier initial learning curve but higher risk of memory bugs without strict safety rules |
| Interoperability | Strong FFI support; safe wrappers around C APIs; explicit boundary marking | Calling into Rust requires functions exported with a C-compatible ABI plus matching header declarations |

Pros

- Strong memory safety guarantees reduce runtime bugs

- Modern tooling speeds development and onboarding

- Fearless concurrency lowers data-race risks

- Zero-cost abstractions preserve performance with safety

- Better long-term maintainability for complex systems

Cons

- Longer compilation times and heavier toolchain

- Steeper learning curve due to ownership, lifetimes, and borrow checking

- FFI complexity when integrating with large C codebases

- Smaller pool of developers in niche domains

Rust is generally the stronger choice for safety and maintainability; C remains preferable for ultra-low-level control or when rewriting legacy components is not feasible.

Choose Rust for new projects with longevity and safety goals. Opt for C when you require minimal runtime, maximal control, or extensive legacy code that resists migration.

Quick Answers

What is the main advantage of Rust over C?

The primary advantage of Rust over C is memory safety without a garbage collector, achieved through ownership and borrowing. This reduces common bugs while preserving low-level performance. It also enables safer concurrency and a modern tooling ecosystem.

Rust’s main edge is memory safety without a GC, thanks to ownership and borrowing, which cuts down bugs and makes concurrent code safer.

Is Rust faster than C in all cases?

No. In many scenarios Rust matches C in performance, but real-world speed depends on how the code is written and optimized. Rust's zero-cost abstractions help maintain performance while adding safety, but poorly written Rust, for example code with needless allocations or copies, can still underperform well-tuned C.

Rust can be as fast as C when written with care, but performance depends on design and optimization just like in C.
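To illustrate what "zero-cost abstraction" means in practice, the sketch below writes the same computation twice: once as an iterator chain and once as a C-style index loop. Both function names are hypothetical. In optimized builds the two typically compile to comparable machine code, though that is worth confirming with a benchmark on your own target.

```rust
/// Iterator version: sum of the squares of the even values.
fn sum_even_squares_iter(values: &[u32]) -> u64 {
    values
        .iter()
        .filter(|&&v| v % 2 == 0)
        .map(|&v| u64::from(v) * u64::from(v))
        .sum()
}

/// Hand-written loop version, in the style one might write in C.
fn sum_even_squares_loop(values: &[u32]) -> u64 {
    let mut total = 0u64;
    let mut i = 0;
    while i < values.len() {
        let v = values[i];
        if v % 2 == 0 {
            total += u64::from(v) * u64::from(v);
        }
        i += 1;
    }
    total
}
```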

What are the downsides of adopting Rust?

Adopting Rust introduces a learning curve around ownership and lifetimes, possible slower compile times, and initial migration costs. Interfacing with large existing C codebases can add complexity, though it is feasible with careful FFI work.

The main downsides are the learning curve and initial migration costs, plus some FFI complexity when linking to C.

How hard is it to integrate Rust into existing C codebases?

Integration is achievable via safe FFI boundaries and incremental migration. Start with isolated modules and wrappers around C APIs, and gradually increase Rust components. This approach minimizes risk and preserves delivery timelines.

You can start by wrapping C APIs with Rust and migrate small modules first for a smoother transition.
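Incremental migration also works in the other direction: existing C code can call a module that has been rewritten in Rust. The sketch below is an assumption-laden illustration, not a prescribed layout. The symbol name `demo_checksum` is hypothetical, and the `#[unsafe(no_mangle)]` attribute syntax assumes Rust 1.82 or newer (older toolchains write `#[no_mangle]`).

```rust
use std::slice;

/// Sums `len` bytes starting at `data`, wrapping on overflow.
/// A matching C declaration would be:
///   uint32_t demo_checksum(const uint8_t *data, size_t len);
///
/// # Safety
/// `data` must be null (treated as empty input) or point to at least
/// `len` readable bytes.
#[unsafe(no_mangle)]
pub unsafe extern "C" fn demo_checksum(data: *const u8, len: usize) -> u32 {
    if data.is_null() {
        return 0;
    }
    // SAFETY: the caller guarantees `data` is valid for `len` bytes.
    let bytes = unsafe { slice::from_raw_parts(data, len) };
    bytes
        .iter()
        .fold(0u32, |acc, &b| acc.wrapping_add(u32::from(b)))
}
```

Built as a static or dynamic library, this function links into a C program like any other C symbol, so one module can be replaced while the rest of the codebase stays in C.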

Can Rust be used for low-level OS development?

Yes. Rust is increasingly used for systems programming and operating-system projects, offering safety without sacrificing low-level control. The Linux kernel, for example, has included support for Rust code since version 6.1, though C remains deeply entrenched in most kernels.

Rust is being explored for OS work, providing safety while still offering low-level control.

Do Rust’s safety guarantees impact performance?

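Mostly not. The bulk of Rust's safety guarantees are enforced at compile time and add no runtime cost. A few checks, such as bounds checks on indexing, do run at run time, though the optimizer can often remove them. Where unsafe blocks are needed, they are carefully bounded, so performance-critical paths can still be optimized by hand. Time saved on debugging memory errors is a productivity gain rather than a speed gain.

Most of Rust's safety checks happen at compile time and cost nothing at runtime; unsafe blocks are isolated when needed.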
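A bounded unsafe block can be sketched as follows. The function name `sum_prefix` is hypothetical; `get_unchecked` is the standard-library method that skips the bounds check. This is an illustration of the pattern only: the compiler often removes such checks on its own, so measure before reaching for unsafe.

```rust
/// Sums the first `n` elements, or returns `None` if `n` exceeds the length.
/// The single up-front check justifies the unchecked reads inside the loop.
fn sum_prefix(values: &[u64], n: usize) -> Option<u64> {
    if n > values.len() {
        return None;
    }
    let mut total = 0u64;
    for i in 0..n {
        // SAFETY: `i < n` and `n <= values.len()` was checked above.
        total = total.wrapping_add(unsafe { *values.get_unchecked(i) });
    }
    Some(total)
}
```

The function's public signature is entirely safe, so callers cannot trigger an out-of-bounds read no matter what arguments they pass.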

Quick Summary

- Prioritize safety when maintenance matters

- Leverage Rust tooling to accelerate development

- Plan gradual interoperability with existing C code

- Assess whether workforce and timelines align with Rust's learning curve