How fast is Rust compared to other languages: a performance guide

Explore how fast Rust is compared to other languages, including C/C++, Go, and Java. This analysis covers design choices, memory management, benchmarks, and practical tips for writing faster Rust code for real-world workloads.

How fast is Rust compared to other languages? In short, Rust generally delivers performance close to C/C++ thanks to zero-cost abstractions, LLVM optimizations, and the absence of a garbage collector. In practice, Rust is highly competitive on compute-heavy tasks and is often faster than managed languages such as Go or Java in latency-sensitive workloads. According to Corrosion Expert analysis, the size of the difference depends on the workload and its memory access patterns.

How fast is Rust compared to other languages?

The speed of software depends on many factors, but the central question is how Rust's design choices translate into real-world performance. At a high level, language speed is driven by compilation, memory management, concurrency, and data layout. Rust's ownership model lets the compiler decide where each value is freed, so memory is reclaimed deterministically with no tracing collector at run time, and the absence of a garbage collector avoids unpredictable pauses. As a result, compute-heavy tasks such as numerical simulations, graphics processing, and systems programming can run with throughput and latency that are competitive with the fastest languages available. Rust's LLVM backend and monomorphized generics also tend to produce highly optimized machine code.

When you measure speed, consider both microbenchmarks and end-to-end workloads, because a fast kernel does not make the application faster if I/O, contention, or synchronization dominates. In practice, you can expect Rust to perform well in single-threaded loops, and in multi-threaded scenarios when you write code with data locality and safe concurrency in mind. This section lays the groundwork for understanding why Rust can be fast across diverse domains, without promising wins in every scenario.

What makes Rust fast: core language design

Rust's speed comes from a combination of language guarantees and compiler-driven optimizations. The ownership system enforces strict borrowing rules that rule out a large class of memory errors and data races at compile time, and the same rules give the optimizer precise aliasing information to work with. Zero-cost abstractions mean that high-level constructs such as iterators and generics usually carry no runtime penalty, because they are specialized during monomorphization and then inlined and simplified by the optimizer. Strong static typing and pattern matching also let the compiler generate efficient code paths for common operations, from vectorized math to memory management. In addition, Rust relies on LLVM for code generation, which provides mature, architecture-aware optimizations that help close the gap with hand-tuned C/C++. While the absence of a garbage collector removes pause-induced latency, Rust programs are still affected by memory layout decisions, cache friendliness, and concurrency strategy. Overall, these design choices make Rust a strong performer across a spectrum of workloads, especially where predictability and safety matter as much as speed.
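To make the zero-cost abstraction idea concrete, here is a minimal sketch that computes the same value two ways. The function names are hypothetical and chosen for illustration; the claim that both compile to similar machine code in release builds is an expectation you should confirm by inspecting or benchmarking your own build.

```rust
/// Sum of the squares of the even values, written as an iterator chain.
/// The closures are monomorphized and are usually inlined in release builds.
pub fn sum_even_squares_iter(values: &[u64]) -> u64 {
    values
        .iter()
        .filter(|&&v| v % 2 == 0)
        .map(|&v| v * v)
        .sum()
}

/// The same computation as a hand-written indexed loop.
pub fn sum_even_squares_loop(values: &[u64]) -> u64 {
    let mut total = 0;
    for i in 0..values.len() {
        // Indexing is bounds-checked unless the optimizer can prove `i` is in range.
        let v = values[i];
        if v % 2 == 0 {
            total += v * v;
        }
    }
    total
}
```

Both functions return the same result for any input slice; the iterator version simply expresses the intent at a higher level without asking for extra work at run time.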

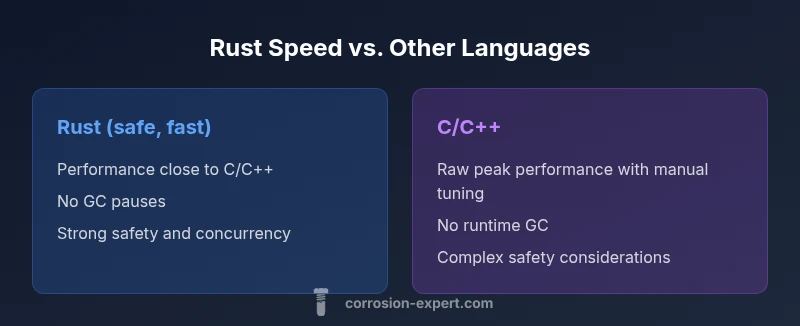

Rust vs C/C++: raw performance realities

The practical speed of Rust versus C/C++ is often comparable for compute-intensive tasks when equivalent algorithms are used. Rust's safety features prevent common mistakes that can degrade performance, and most of them are enforced at compile time; the main runtime costs in safe code are checks such as slice bounds checks, which the optimizer can often remove. When you need ultimate micro-optimizations, unsafe blocks allow direct control over memory layout and operations, but these should be used sparingly and with rigorous review. The main difference emerges in development trade-offs: Rust offers safer concurrency, clearer ownership semantics, and more predictable behavior, which can translate into faster iteration cycles and fewer performance regressions over time. For many teams, Rust's performance profile matches or exceeds what they would achieve with C++, particularly once memory safety concerns are addressed and the code is tuned for locality and parallelism.
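As a sketch of the trade-off between safe code and unsafe micro-optimization, the two hypothetical functions below compute a dot product. The safe version uses `zip`, which avoids indexing altogether; the unchecked version uses the standard `get_unchecked` slice method and documents why each access is in bounds. Whether the unchecked version is measurably faster is an assumption to test with a profiler, not a given.

```rust
/// Safe dot product: `zip` stops at the shorter slice, so no access can be out of range.
pub fn dot_safe(a: &[f64], b: &[f64]) -> f64 {
    a.iter().zip(b).map(|(x, y)| x * y).sum()
}

/// Unchecked variant, shown for illustration only.
pub fn dot_unchecked(a: &[f64], b: &[f64]) -> f64 {
    let n = a.len().min(b.len());
    let mut acc = 0.0;
    for i in 0..n {
        // SAFETY: i < n, and n <= a.len() and n <= b.len(), so both reads are in bounds.
        acc += unsafe { a.get_unchecked(i) * b.get_unchecked(i) };
    }
    acc
}
```

In many cases the safe version already compiles to a loop without bounds checks, which is why reaching for `unsafe` is worth it only after profiling shows a real gain.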

Rust vs Go, Java, and other managed languages

Because Rust has no traditional garbage collector, it typically avoids the pause times and latency variability that can appear in managed environments. Go and Java systems can exhibit GC-induced pauses that complicate latency guarantees, especially in real-time or interactive contexts, although modern collectors typically keep those pauses short. Rust provides concurrency models that scale with minimal overhead, while Java and Go emphasize productivity and safe parallelism at the expense of occasional GC-related variability. When comparing startup time and steady-state throughput, Rust can outperform managed runtimes in many scenarios, particularly when the workload is compute-bound. Conversely, managed languages can win on rapid development cycles, extensive runtime ecosystems, and simpler tuning for I/O-bound tasks. The key takeaway is to align language choice with workload characteristics: Rust shines in performance-critical systems, while managed languages may excel in rapid prototyping and high-level service logic.
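For a sense of what low-overhead, compiler-checked concurrency looks like, the sketch below sums a slice in parallel using only the standard library's scoped threads. The function name `parallel_sum` and the chunking strategy are illustrative assumptions, not a recommended production design; a work-stealing library may schedule uneven work better.

```rust
use std::thread;

/// Sums `data` by splitting it into up to `workers` chunks, one scoped thread per chunk.
pub fn parallel_sum(data: &[u64], workers: usize) -> u64 {
    let workers = workers.max(1);
    // Ceiling division so every element lands in a chunk; `max(1)` avoids a zero chunk size.
    let chunk_len = ((data.len() + workers - 1) / workers).max(1);
    thread::scope(|scope| {
        // Collect the handles first so all threads are spawned before any is joined.
        let handles: Vec<_> = data
            .chunks(chunk_len)
            .map(|chunk| scope.spawn(move || chunk.iter().sum::<u64>()))
            .collect();
        handles
            .into_iter()
            .map(|handle| handle.join().expect("worker thread panicked"))
            .sum::<u64>()
    })
}
```

The threads borrow `data` directly, with no reference counting or copying, and the borrow checker verifies at compile time that the borrow cannot outlive the scope.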

Benchmarks and real-world workloads: what to expect

Benchmark results vary widely depending on compiler flags, target architecture, and workload characteristics. In practice, Rust looks excellent on workloads that are compute-bound and memory-sensitive, while real-world performance depends on how well you optimize data structures, memory layouts, and parallel execution. Corrosion Expert analysis shows that benchmarks often report Rust near the top among compiled languages for many tasks, but relative rankings shift when considering end-to-end systems with I/O, serialization, and network communication. For developers, the most reliable approach is to profile with real workloads, use representative data sets, and repeat measurements under consistent conditions. Avoid drawing broad conclusions from isolated microbenchmarks that do not reflect your application's end-to-end behavior.

Writing faster Rust: practical guidelines

Practical Rust performance comes from choosing the right abstractions, memory layouts, and concurrency strategy.

- Favor iterators over indexed loops; they compose well and often let the compiler elide bounds checks.

- Minimize heap allocations in hot paths by using stack-allocated data and pre-allocated structures.

- Prefer generics, which are monomorphized into static dispatch, over dynamic dispatch in hot paths, and lean on zero-cost abstractions that the compiler can optimize away.

- Profile early and often with cargo bench, Criterion, and flamegraph tools to identify hotspots.

- Use rayon for safe parallelism when data parallelism is appropriate, and consider unsafe blocks only for critical hot loops where you have proven correctness.

- Build in release mode, enable LTO, and apply hot-path optimizations such as alignment and cache-friendly layouts.

- Choose data structures and algorithms with cache locality in mind, as memory access patterns often dominate performance.

- Remember that safety and speed are not mutually exclusive; you can achieve both through thoughtful design and disciplined optimization.
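The allocation guideline above can be sketched as follows. Both function names are hypothetical; the first reserves capacity once with `Vec::with_capacity`, and the second writes into a caller-owned `String` so that a hot loop can reuse a single allocation across calls.

```rust
/// Builds the squares of 0..n, reserving the full capacity up front
/// so the vector does not reallocate while it is being filled.
pub fn squares_preallocated(n: usize) -> Vec<u64> {
    let mut out = Vec::with_capacity(n);
    for i in 0..n as u64 {
        out.push(i * i);
    }
    out
}

/// Joins `words` in ASCII upper case into `buf`, separated by spaces.
/// `clear` keeps the buffer's existing capacity, so repeated calls can reuse it.
pub fn join_upper_into(words: &[&str], buf: &mut String) {
    buf.clear();
    for (i, word) in words.iter().enumerate() {
        if i > 0 {
            buf.push(' ');
        }
        // Extend character by character to avoid a temporary String per word.
        buf.extend(word.chars().map(|c| c.to_ascii_uppercase()));
    }
}
```

A caller would typically create the buffer once outside the loop, for example `let mut buf = String::new();`, and pass `&mut buf` on every iteration.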

Common pitfalls that slow Rust programs

Common Rust performance issues stem from non-idiomatic patterns and misapplied optimizations.

- Overuse of dynamic dispatch and trait objects adds vtable calls in hot paths and blocks inlining.

- Excessive intermediate allocations or cloning of data can throttle throughput.

- Poor data layout, such as structs that mix hot and cold fields or non-contiguous collections, hurts cache locality.

- Unnecessary monomorphization can blow up binary size and compilation time, complicating maintenance.

- Heavy use of unsafe code, while powerful, requires rigorous correctness guarantees and may introduce subtle performance regressions if not carefully audited.

- I/O-bound workloads can suffer if the asynchronous model is not used effectively; choosing the right async runtime and avoiding blocking calls helps.

- Relying on micro-optimizations without profiling often leads to diminishing returns; invest effort in identifying real bottlenecks first.
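The dynamic dispatch pitfall is easiest to see side by side. In this minimal sketch, the `Shape` trait and the `Square` and `Rect` types are invented for illustration; the point is only the difference between a generic function and one that takes trait objects.

```rust
pub trait Shape {
    fn area(&self) -> f64;
}

pub struct Square(pub f64);
pub struct Rect(pub f64, pub f64);

impl Shape for Square {
    fn area(&self) -> f64 {
        self.0 * self.0
    }
}

impl Shape for Rect {
    fn area(&self) -> f64 {
        self.0 * self.1
    }
}

/// Static dispatch: one copy is compiled per concrete `S`, so `area` can be inlined.
pub fn total_area_static<S: Shape>(shapes: &[S]) -> f64 {
    shapes.iter().map(|s| s.area()).sum()
}

/// Dynamic dispatch: a single compiled copy, with each `area` call going through a vtable.
pub fn total_area_dyn(shapes: &[Box<dyn Shape>]) -> f64 {
    shapes.iter().map(|s| s.area()).sum()
}
```

The generic version requires every element to have the same type, while the trait-object version accepts a mixed collection. That flexibility is the reason to accept the indirection, and the same trade-off explains the monomorphization pitfall: each additional concrete type used with the generic function adds another compiled copy.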

Determining speed in your use case

Defining what "fast" means requires clear criteria. Start by listing the most important metrics for your application: throughput, latency, end-to-end response time, CPU cycles, memory footprint, and scalability under concurrency. Use cargo bench or Criterion to create realistic benchmarks that mirror your workload, then profile with flamegraphs and perf tooling to locate bottlenecks. Compare Rust to alternatives under the same workload, and make sure compiler optimizations (release mode, LTO, and target-specific flags) are applied consistently. Include end-to-end measurements, because network latency, disk I/O, and serialization costs can dominate perceived speed. Finally, remember that architecture matters: CPU features, cache sizes, memory bandwidth, and parallelism capabilities will shape outcomes. The goal is to quantify practical speed in a way that informs architecture, algorithms, and code design for your specific use case.
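Before setting up a full benchmark suite, a rough first measurement can be taken with the standard library alone. The helper below is a hypothetical sketch, not a substitute for Criterion: it has no warm-up phase and no statistical analysis, and it reports only the fastest of several runs. `sum_to` is a stand-in workload.

```rust
use std::hint::black_box;
use std::time::{Duration, Instant};

/// Runs `f` `iters` times and returns the fastest observed wall-clock duration.
/// Returns `Duration::MAX` if `iters` is zero.
pub fn best_of<T>(iters: u32, mut f: impl FnMut() -> T) -> Duration {
    let mut best = Duration::MAX;
    for _ in 0..iters {
        let start = Instant::now();
        // `black_box` discourages the optimizer from discarding the result.
        black_box(f());
        best = best.min(start.elapsed());
    }
    best
}

/// Stand-in workload: the sum of 1..=n.
pub fn sum_to(n: u64) -> u64 {
    (1..=n).sum()
}
```

A call such as `best_of(20, || sum_to(black_box(1_000_000)))` gives a quick number to compare between changes, provided the program is built in release mode each time.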

Feature Comparison

| Feature | Rust (safe, fast) | C/C++ | Go | Java/JVM |
|---|---|---|---|---|
| Performance characteristics | Near-C/C++ level for compute-heavy tasks | High raw throughput with precise control over memory | Good concurrency with lightweight threads | Typically strong but can be affected by GC pauses in some runtimes |
| Memory management | No GC; ownership/borrowing enforce safety | Manual memory management with careful usage | Garbage-collected, potential pauses | GC-based memory management with tuning in JVM |
| Concurrency model | Fearless concurrency with borrow checker | Threads with manual synchronization | Goroutines with channels | Threads and `java.util.concurrent` utilities, with GC-managed memory and runtime optimizations |
| Compile-time behavior | Monomorphization, inlining, zero-cost abstractions | Aggressive ahead-of-time optimization; often large binaries | Fast compile times; toolchain optimized for build speed | Compiles to bytecode; JIT and AOT optimizations vary by VM |
| Best for | Systems programming, performance-critical apps | Microcontrollers and close-to-metal tasks | Networking and concurrent services with simple models | Large-scale apps with runtime safety and JVM ecosystem |

The Good

- Near-C/C++ performance for compute-heavy tasks

- No garbage collector enables predictable latency

- Strong safety guarantees without runtime penalties

- Excellent cross-platform support and ecosystem

Cons

- Steeper learning curve and more boilerplate

- Longer compile times and code bloat for generic-heavy code

- Unsafe code required for extreme micro-optimizations carries risk

- Platform-specific quirks can affect performance tuning

Rust generally offers best-in-class performance with safety; for compute-heavy workloads it often rivals C/C++, making it a strong default choice.

Rust's design enables high performance without a GC, balancing safety and speed. In many real-world workloads, you can approach C++-level throughput while retaining safer semantics. The Corrosion Expert team recommends evaluating your workload and using idiomatic Rust patterns to extract maximum speed.

Quick Answers

How does Rust speed compare to C++ in practice?

In many compute-bound scenarios, Rust performance is comparable to C++ when the same algorithms and optimizations are used. The safety guarantees of Rust help prevent performance-degrading bugs, and avoiding a GC reduces pause times. Real-world results depend on memory access patterns, inlining, and how aggressively you optimize hot paths.

Rust often matches C++ in practical performance, provided you optimize memory access and inline critical code.

Does Rust have a garbage collector?

No, Rust does not have a traditional garbage collector. Ownership and borrowing rules let the compiler determine where each value goes out of scope, and the memory is freed at that point at run time, which eliminates GC pauses and improves predictability. Your program can still allocate on the heap, but allocations are explicit and controllable by the programmer.

Rust has no GC; memory is managed through ownership and borrowing.
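A small sketch can show what deterministic cleanup means in practice. The `Tracked` type below is hypothetical; it records its name in a shared log when it is dropped, so the order of cleanup becomes visible.

```rust
use std::cell::RefCell;

/// Records its name in a shared log when it is dropped.
pub struct Tracked<'a> {
    name: &'static str,
    log: &'a RefCell<Vec<&'static str>>,
}

impl Drop for Tracked<'_> {
    fn drop(&mut self) {
        self.log.borrow_mut().push(self.name);
    }
}

/// Returns the order in which events happened, including the two drops.
pub fn drop_order() -> Vec<&'static str> {
    let log = RefCell::new(Vec::new());
    {
        let _outer = Tracked { name: "outer", log: &log };
        {
            let _inner = Tracked { name: "inner", log: &log };
        } // `_inner` goes out of scope and is dropped here
        log.borrow_mut().push("between scopes");
    } // `_outer` goes out of scope and is dropped here
    log.into_inner()
}
```

Calling `drop_order()` yields `["inner", "between scopes", "outer"]`: each value is released exactly where its scope ends, with no collector deciding the timing.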

Can Rust be slower than other languages?

Yes. If you write non-idiomatic code or optimize the wrong parts of a hot path, Rust can underperform highly tuned implementations in other languages. However, with proper data structures, cache-friendly layouts, and parallelism, Rust tends to perform very well relative to many languages.

Rust can be slower if you don’t optimize, but proper patterns usually keep it fast.

What metrics should I use to gauge Rust performance?

Use a mix of CPU time, throughput, latency, and memory usage. Employ microbenchmarks for kernels and real workloads for end-to-end evaluation. Tools like cargo bench and Criterion help quantify improvements, while flamegraphs and perf reveal hotspots.

Measure CPU time, latency, throughput, and memory to gauge Rust performance.

How can I optimize hot loops in Rust?

Target hot loops with data-locality improvements, avoid unnecessary allocations, and leverage inlining and monomorphization. Use iterator-based patterns and avoid dynamic dispatch in critical paths. Profiling is essential to ensure changes yield real benefits.

Focus on data locality and avoiding allocations in hot loops.
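One common data-locality change is to move from an array of structs to a struct of arrays when a hot loop reads only one field. The types and function names below are hypothetical, and whether the second layout is faster depends on your access pattern and hardware, so treat it as a candidate to benchmark rather than a rule.

```rust
/// Array-of-structs layout: each particle's fields sit next to each other in memory.
pub struct ParticleAos {
    pub x: f32,
    pub y: f32,
    pub z: f32,
    pub mass: f32,
}

/// Struct-of-arrays layout: each field is stored contiguously across all particles.
pub struct ParticlesSoa {
    pub x: Vec<f32>,
    pub y: Vec<f32>,
    pub z: Vec<f32>,
    pub mass: Vec<f32>,
}

/// Reads one field per particle, so the unused fields still occupy space
/// in every cache line that is loaded.
pub fn total_mass_aos(particles: &[ParticleAos]) -> f32 {
    particles.iter().map(|p| p.mass).sum()
}

/// Walks a single contiguous array, so every loaded cache line holds only masses.
pub fn total_mass_soa(particles: &ParticlesSoa) -> f32 {
    particles.mass.iter().sum()
}
```

The array-of-structs layout remains the better fit when a loop uses all fields of each element together, which is why profiling the actual access pattern comes first.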

Are there official benchmarks for Rust performance?

There are community-driven and industry benchmarks comparing Rust to other languages, but results vary by workload and environment. Use benchmarks that reflect your use case and hardware, and treat comparisons as directional guidance rather than absolute truth.

Benchmarks vary; pick ones that match your workload.

Quick Summary

- Define performance goals early and measure with realistic workloads

- Leverage Rust’s zero-cost abstractions and ownership for speed

- Avoid unsafe blocks unless profiling proves a necessity

- Profile, benchmark, and optimize data locality and concurrency

- Use release builds and appropriate compiler flags for best results